AUTONOMOUS SYSTEMS

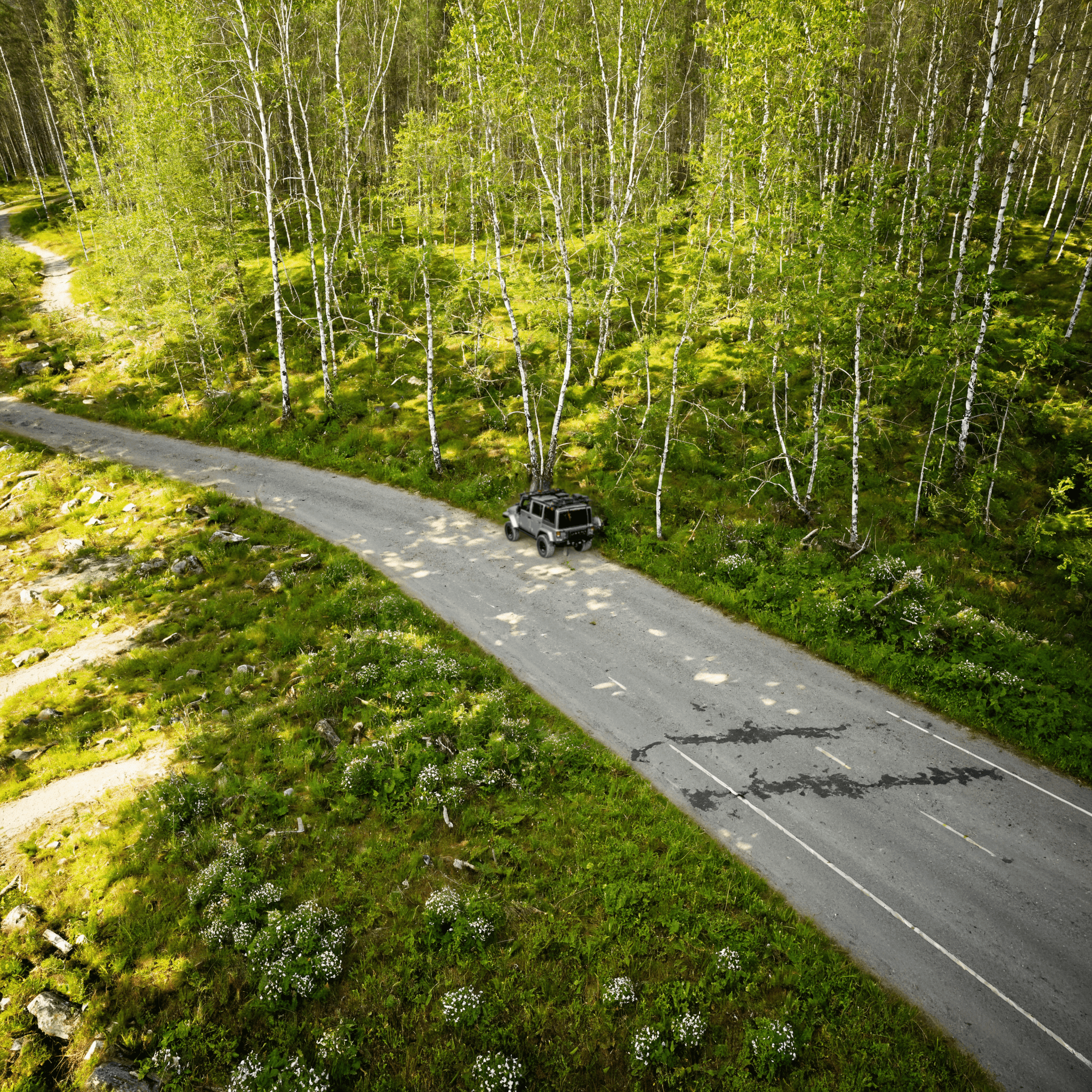

Drones can’t afford a trial-and-error approach to training. To master chaotic, low-altitude environments, autonomous UAVs need synthetic computer vision data that is engineered for entropy.

In the world of autonomous systems, drones represent a unique convergence of friction. Unlike a warehouse robot constrained to a 2D floor grid, a drone must navigate a relentless 3D matrix of changing variables: unpredictable wind currents, specular lighting glare on building surfaces, thin power lines that defy 2D sensors, and the chaotic movement of pedestrians or other aircraft.

For the AI models piloting these drones, the real world isn’t just noisy; it is actively hostile to training data.

The traditional approach of manually collecting and labeling 2D video data for aerial autonomy has reached its limit. We are entering an era in which simulation is not just a safety net; it is the essential foundry for building robust drone intelligence.

Computer Vision (CV) models on drones are often tasked with "Semantic Segmentation" (identifying which pixels are buildings vs. sky) and "Object Detection" (spotting a power line or a person).

When you train a drone using only real-world footage, your model is limited by the environments you can legally (and safely) fly in. It learns to recognise "trees" on sunny days in clear airspace. It does not learn how a tree looks when obscured by a lens flare, shadowed by a cloud, or viewed through the interference of a propeller's motion blur.

This is where standard synthetic data has also traditionally failed. A perfectly rendered 3D simulation of an urban environment might look beautiful to a human, but it is "too clean" for an AI model. This discrepancy is known as the "Sim-to-Real Gap." When a model trained on perfect data encounters its first dusty sensor or poorly lit alleyway, its confidence drops and it fails to generalise from what it learned in training.

At Repli5, we focus on High-Entropy Synthetic Data.

The goal is not to create a pretty picture; it is to stress-test the CV model by procedurally injecting the variables that cause models to fail in the physical world. To create a robust autonomous drone, your synthetic training data must incorporate:

Lighting Anomalies: Don’t just simulate sun strength. Replicate the blinding glare on a metallic roof or the extreme contrast when a drone moves from bright sunlight into deep shade.

Sensor Noise & Motion Blur: The real world is rarely in sharp focus. Training data must include the camera vibration and motion blur that the drone will typically encounter once deployed.

Complex Occlusions: AI must learn to recognise an object even when it is only 20% visible. Our 3D environments offer contextual occlusions as well as weather effects like rain or fog to force the model to look beyond the obvious.

Perfect Physics-Based Labels: When training in simulation, every single frame can be automatically generated with perfect 2D or 3D bounding boxes, semantic maps, and depth information. This eliminates manual labelling errors and makes prototype development scalable by minimising the upfront time and cost of annotation.
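To illustrate why simulated labels come "for free", here is a minimal sketch of deriving 2D bounding boxes directly from a per-pixel instance mask. It assumes the renderer can export an integer mask in which 0 is background and every other value identifies one object. The function name and the toy mask are hypothetical, chosen for illustration only, and do not describe any particular simulation pipeline.

```python
import numpy as np


def boxes_from_instance_mask(
    instance_mask: np.ndarray,
) -> dict[int, tuple[int, int, int, int]]:
    """Map each instance ID to an inclusive (x_min, y_min, x_max, y_max) box.

    Assumption: ID 0 marks background and is skipped.
    """
    boxes = {}
    for instance_id in np.unique(instance_mask):
        if instance_id == 0:
            continue
        # Row (y) and column (x) indices of every pixel owned by this instance
        ys, xs = np.nonzero(instance_mask == instance_id)
        boxes[int(instance_id)] = (
            int(xs.min()), int(ys.min()), int(xs.max()), int(ys.max())
        )
    return boxes


# Toy 6x8 "render": instance 1 is a one-pixel-thick power line,
# instance 2 is a small blob in the lower-left corner.
mask = np.zeros((6, 8), dtype=np.int32)
mask[1, 2:7] = 1
mask[3:5, 0:2] = 2

boxes = boxes_from_instance_mask(mask)
print(boxes)
```

Because the mask comes from the renderer rather than a human annotator, even a one-pixel-thick object such as the power line receives an exact box.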

By training on high-fidelity synthetic environments built with a deep understanding of physical entropy, you are giving the perception model the resilience to thrive in the chaos it will inevitably encounter.
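The lighting, occlusion, blur, and noise variables listed above can be sketched as a chain of simple image degradations. What follows is an illustrative NumPy approximation on a grayscale frame, not a physically based renderer and not a description of any specific product pipeline. All function names and parameter values are assumptions made for the example.

```python
import numpy as np


def add_glare(img, center, radius, strength):
    """Brighten a Gaussian-shaped region to mimic specular glare."""
    h, w = img.shape
    yy, xx = np.mgrid[0:h, 0:w]
    dist2 = (yy - center[0]) ** 2 + (xx - center[1]) ** 2
    falloff = np.exp(-dist2 / (2.0 * radius ** 2))
    return np.clip(img + strength * falloff, 0.0, 1.0)


def occlude_box(img, box, visible_fraction, fill=0.0):
    """Hide the left part of an object box, leaving roughly visible_fraction."""
    x_min, y_min, x_max, y_max = box  # inclusive pixel coordinates
    width = x_max - x_min + 1
    n_hidden = int(round(width * (1.0 - visible_fraction)))
    out = img.copy()
    out[y_min:y_max + 1, x_min:x_min + n_hidden] = fill
    return out


def motion_blur(img, length):
    """Horizontal box blur approximating linear camera motion.

    Borders are zero-padded, so pixels near the left and right edges darken.
    """
    kernel = np.ones(length) / length
    return np.apply_along_axis(
        lambda row: np.convolve(row, kernel, mode="same"), 1, img
    )


def add_sensor_noise(img, sigma, rng):
    """Add zero-mean Gaussian noise and clip back to the valid range."""
    return np.clip(img + rng.normal(0.0, sigma, img.shape), 0.0, 1.0)


rng = np.random.default_rng(0)

# "Too clean" synthetic frame: flat background with one bright object
clean = np.full((64, 64), 0.5)
object_box = (24, 24, 39, 39)
clean[24:40, 24:40] = 0.9

# Scene-level effects first (glare, occluder), then sensor-level effects
glared = add_glare(clean, center=(10, 50), radius=8.0, strength=0.6)
occluded = occlude_box(glared, object_box, visible_fraction=0.2)
blurred = motion_blur(occluded, length=7)
degraded = add_sensor_noise(blurred, sigma=0.03, rng=rng)
```

The ordering is a design choice: glare and occluders belong to the scene, while blur and noise arise at the camera, so they are applied last. In practice each parameter would be randomised per frame so that the model never sees the same failure mode twice.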

The future of low-altitude aerial autonomy will not be built on the back of manual data labelling. It will be built in the safety, scale, and complexity of simulations that dare to replicate the friction of the real world.